My research is focused on developing safe and reliable AI systems.

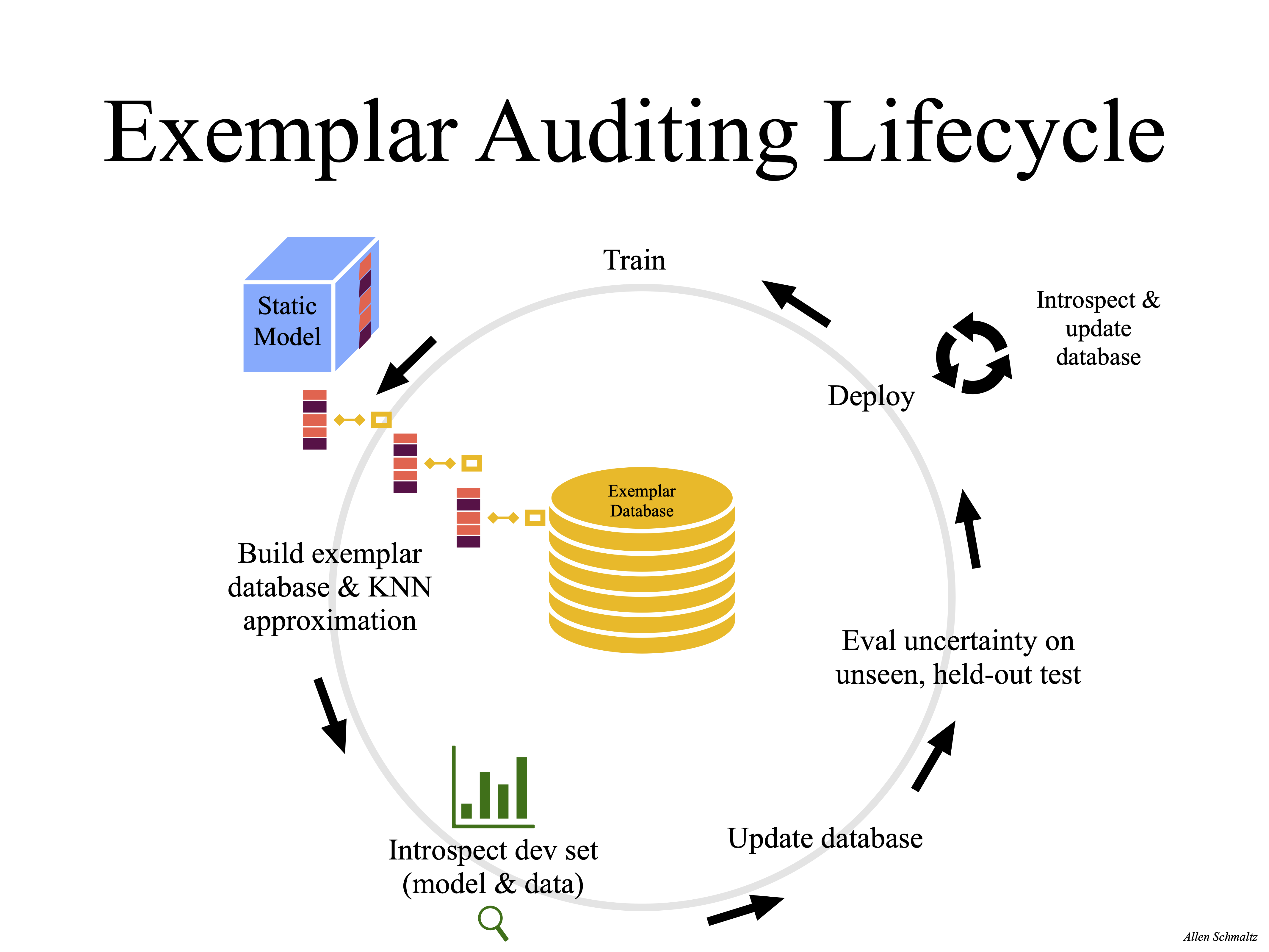

After completing my Ph.D. in Computer Science at Harvard in 2019, I worked in the Department of Epidemiology to bring deep learning research to the Harvard medical community and, more broadly, to the medical field. Toward this end, I developed a series of new approaches for understanding, analyzing, and updating neural networks and their associated datasets. Ultimately, these approaches are meant to underpin the reliable deployment of neural networks in medical applications.

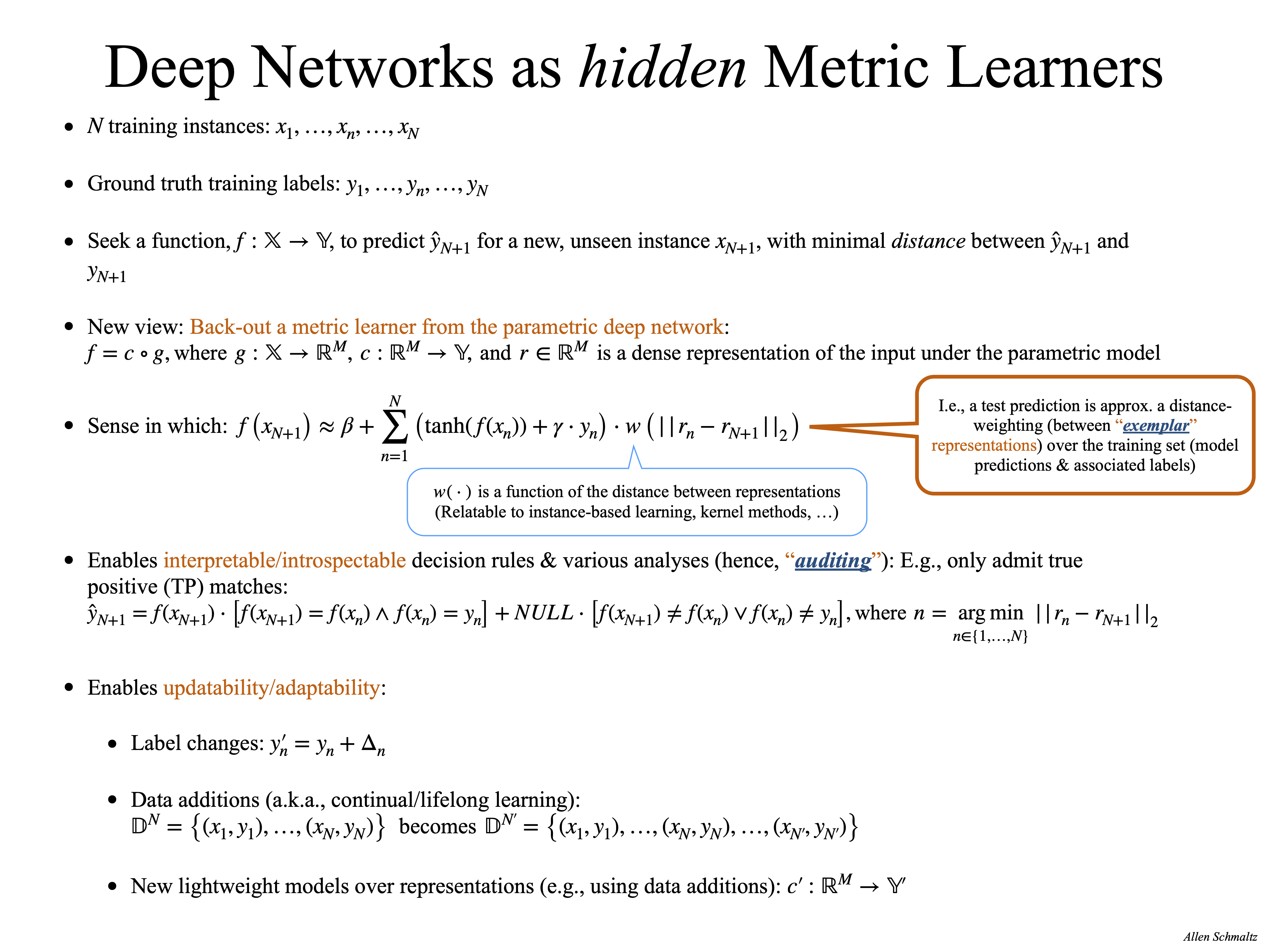

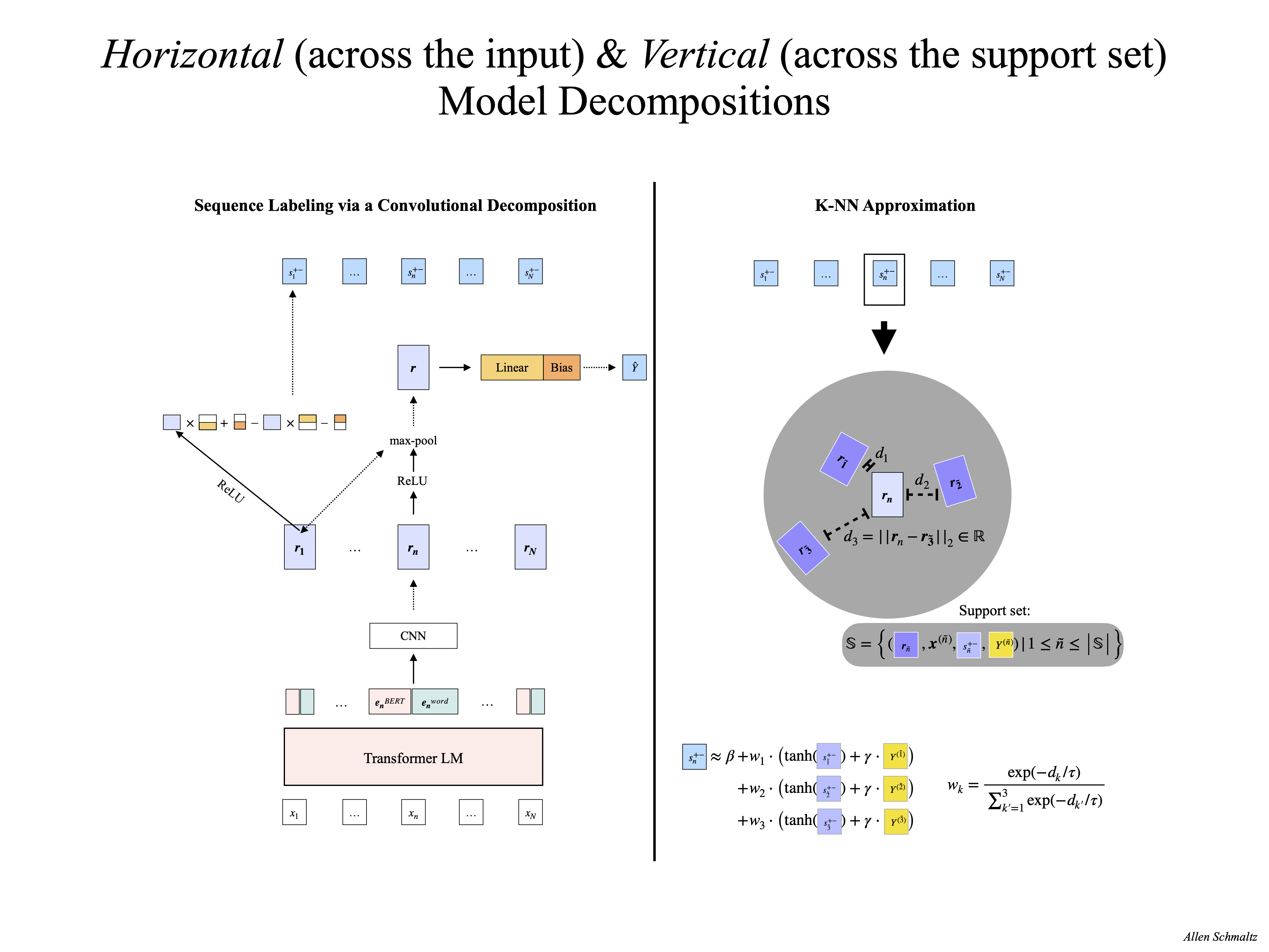

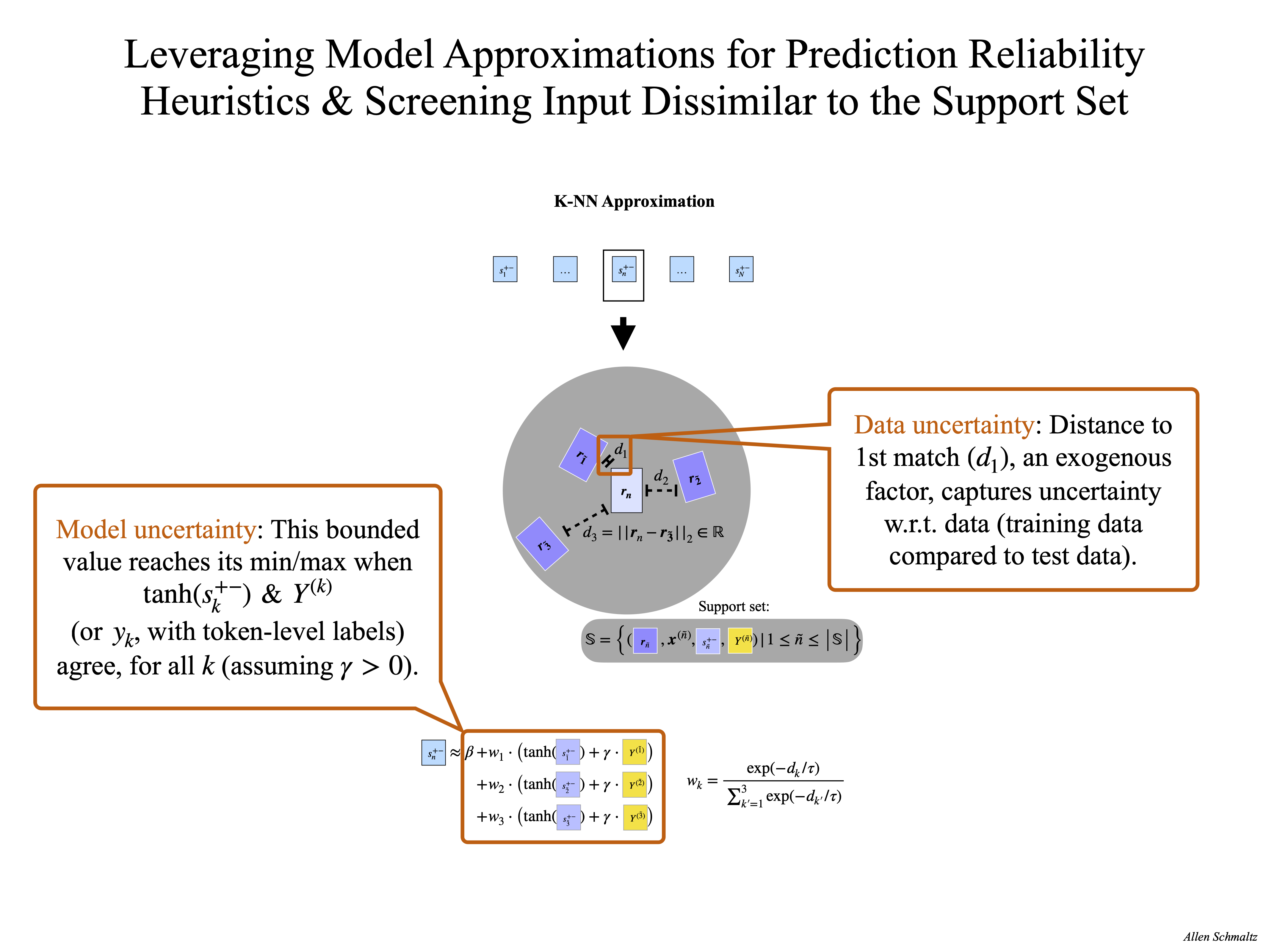

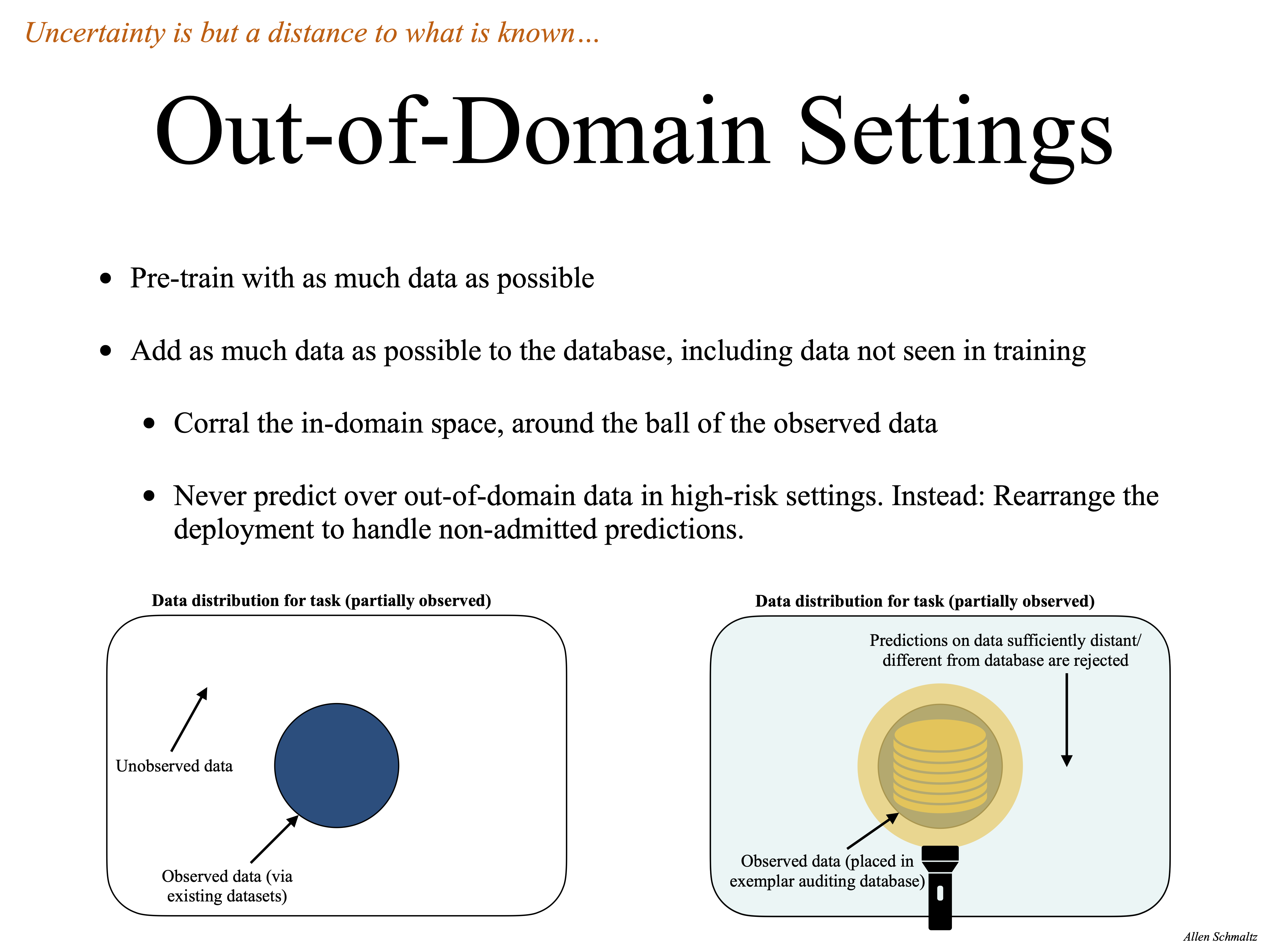

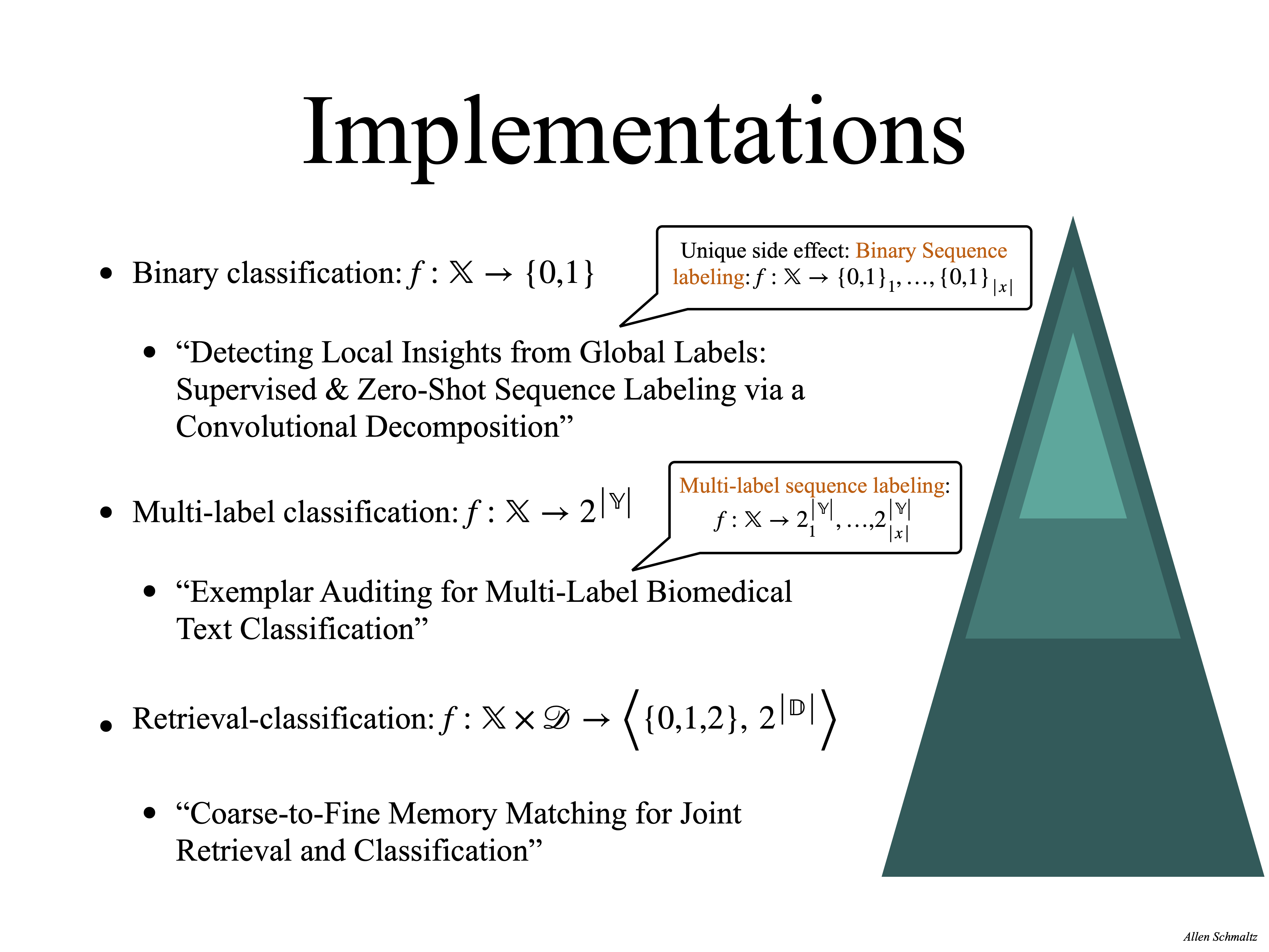

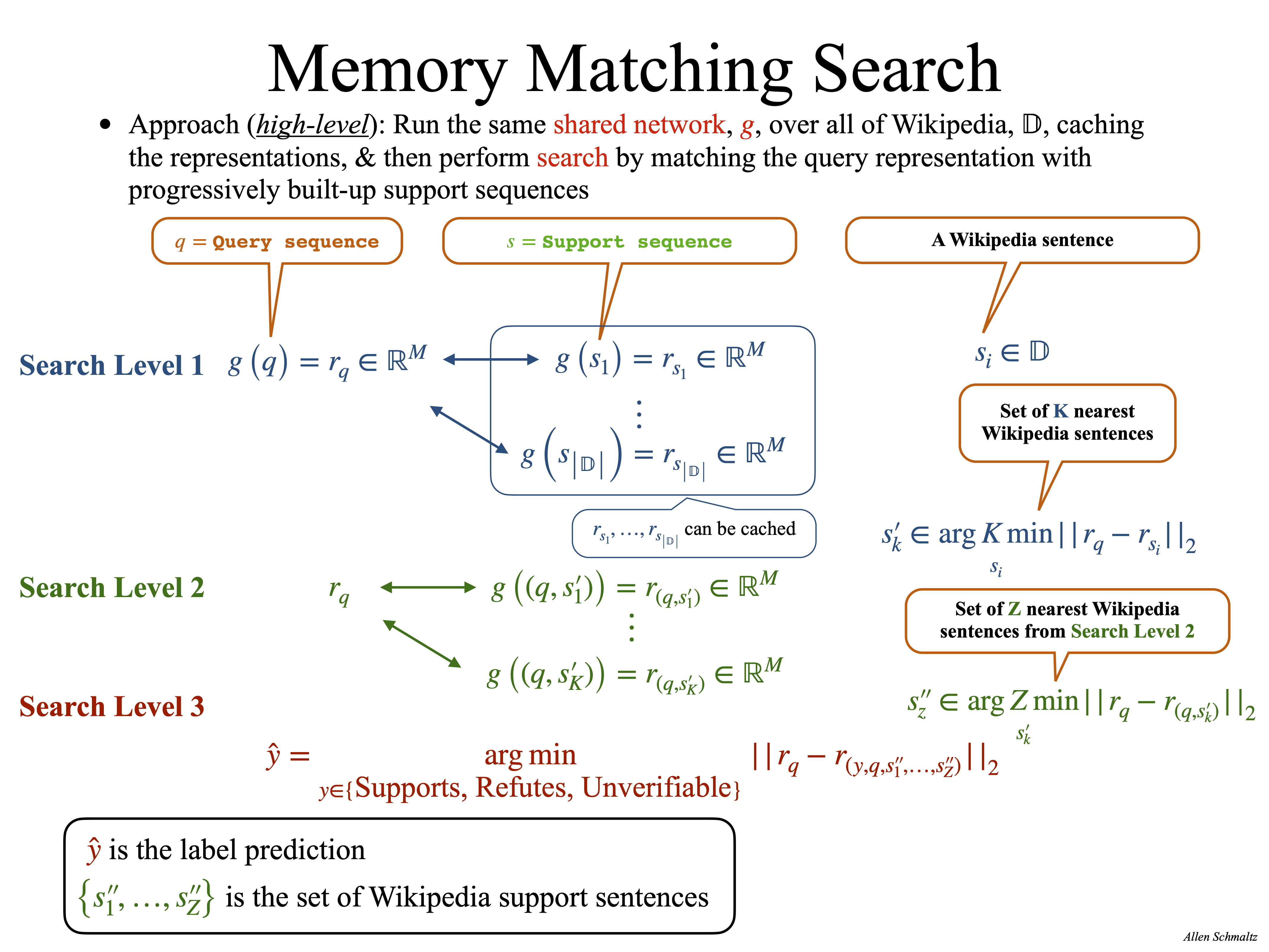

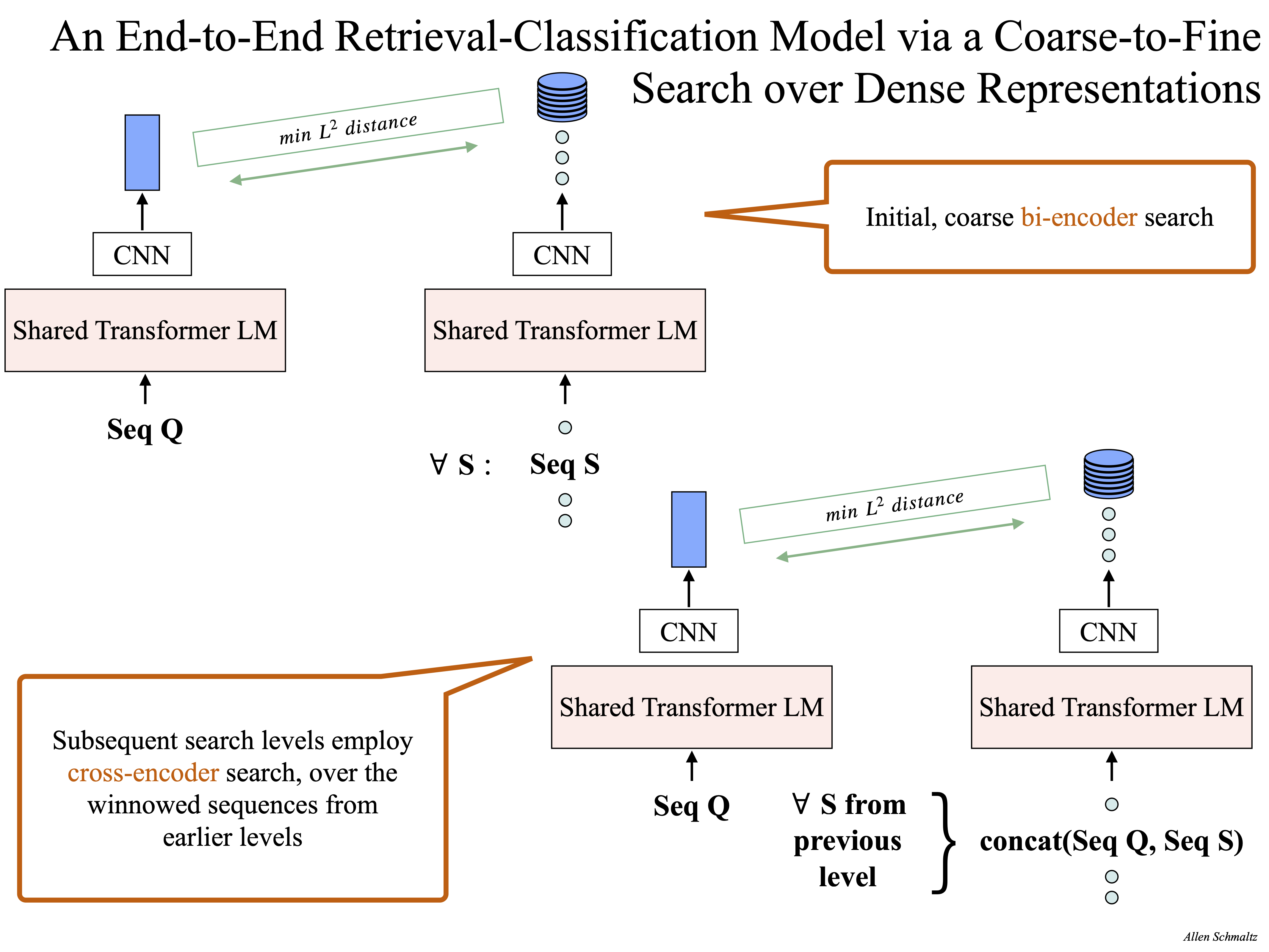

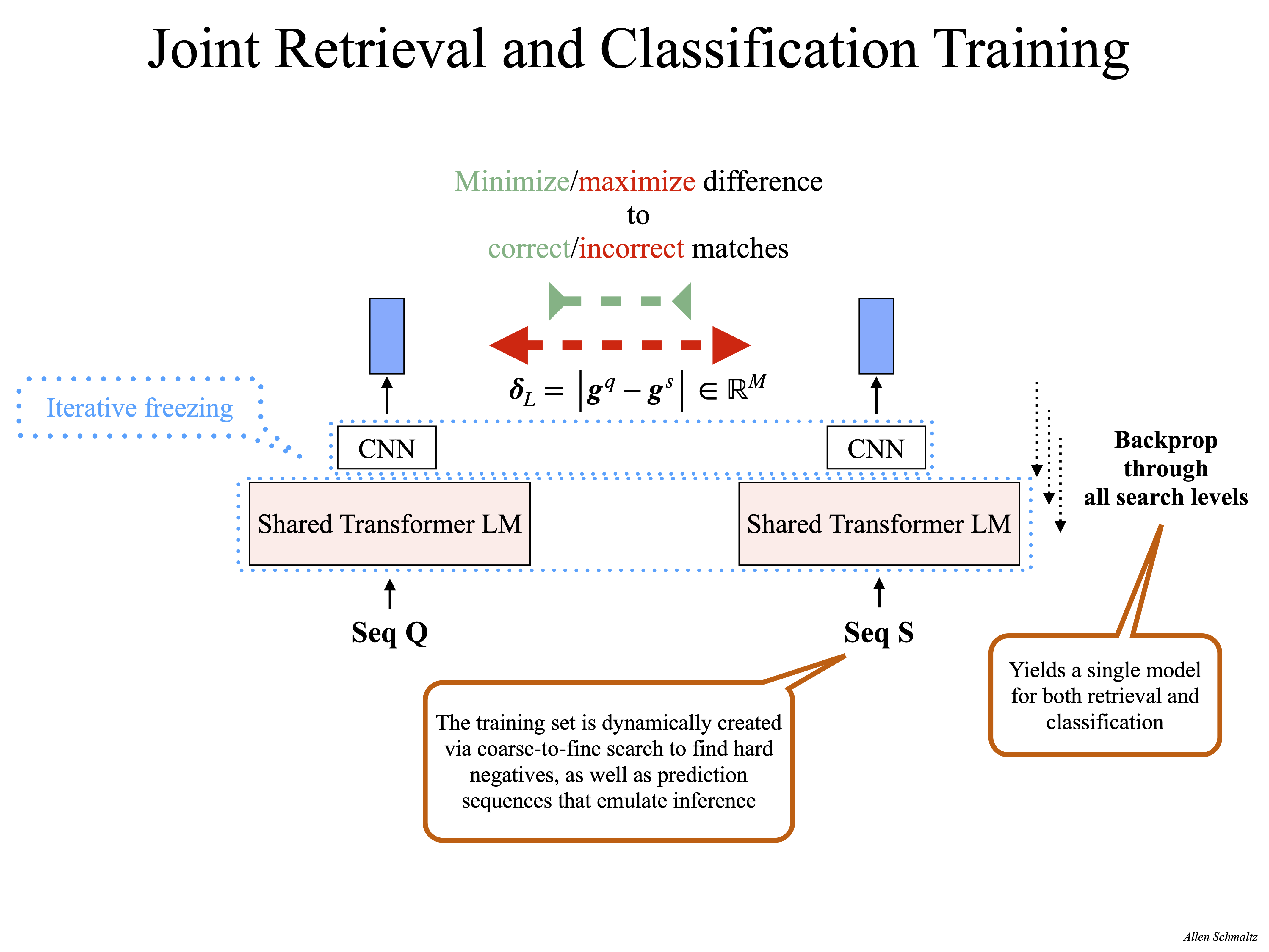

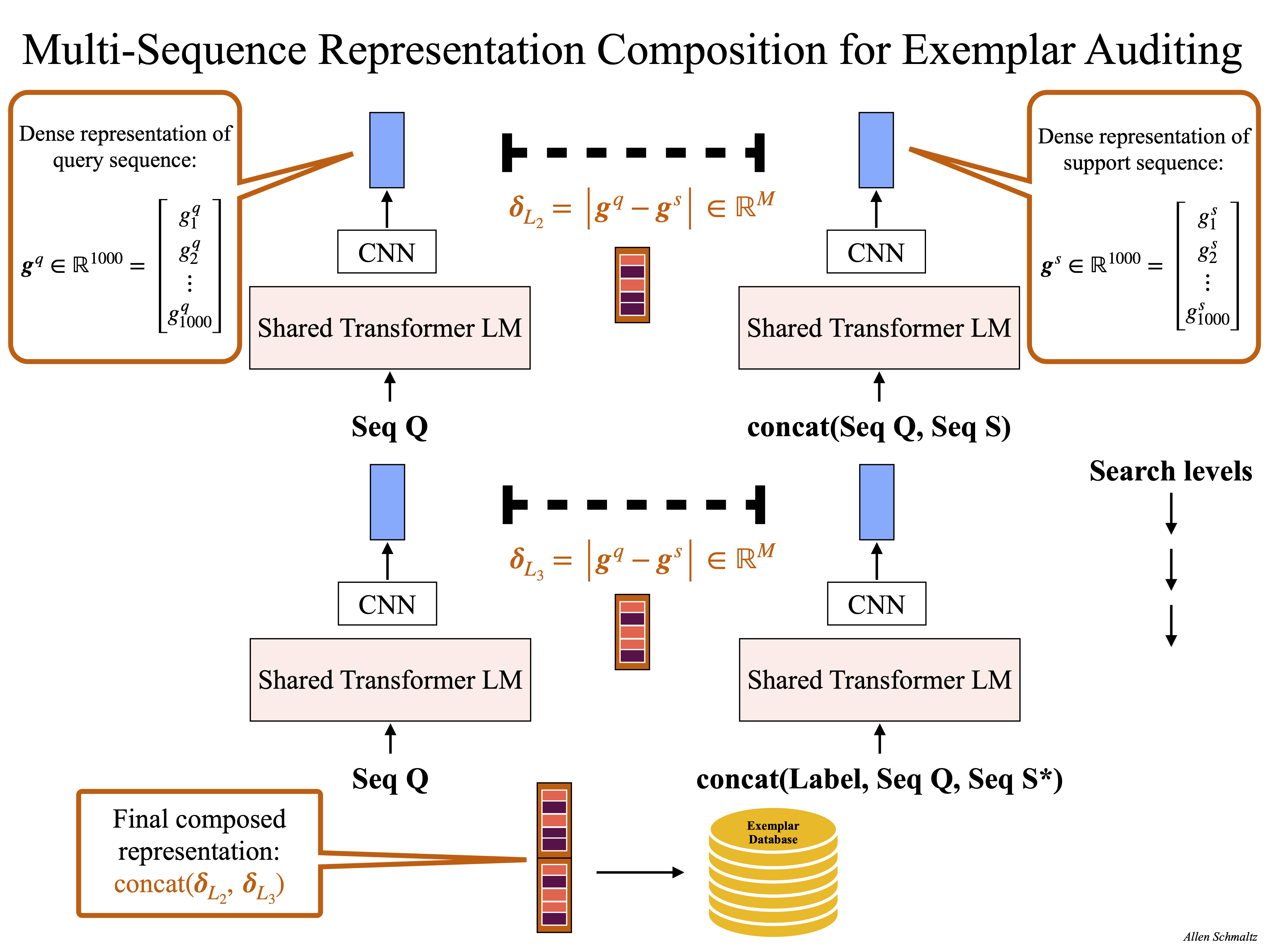

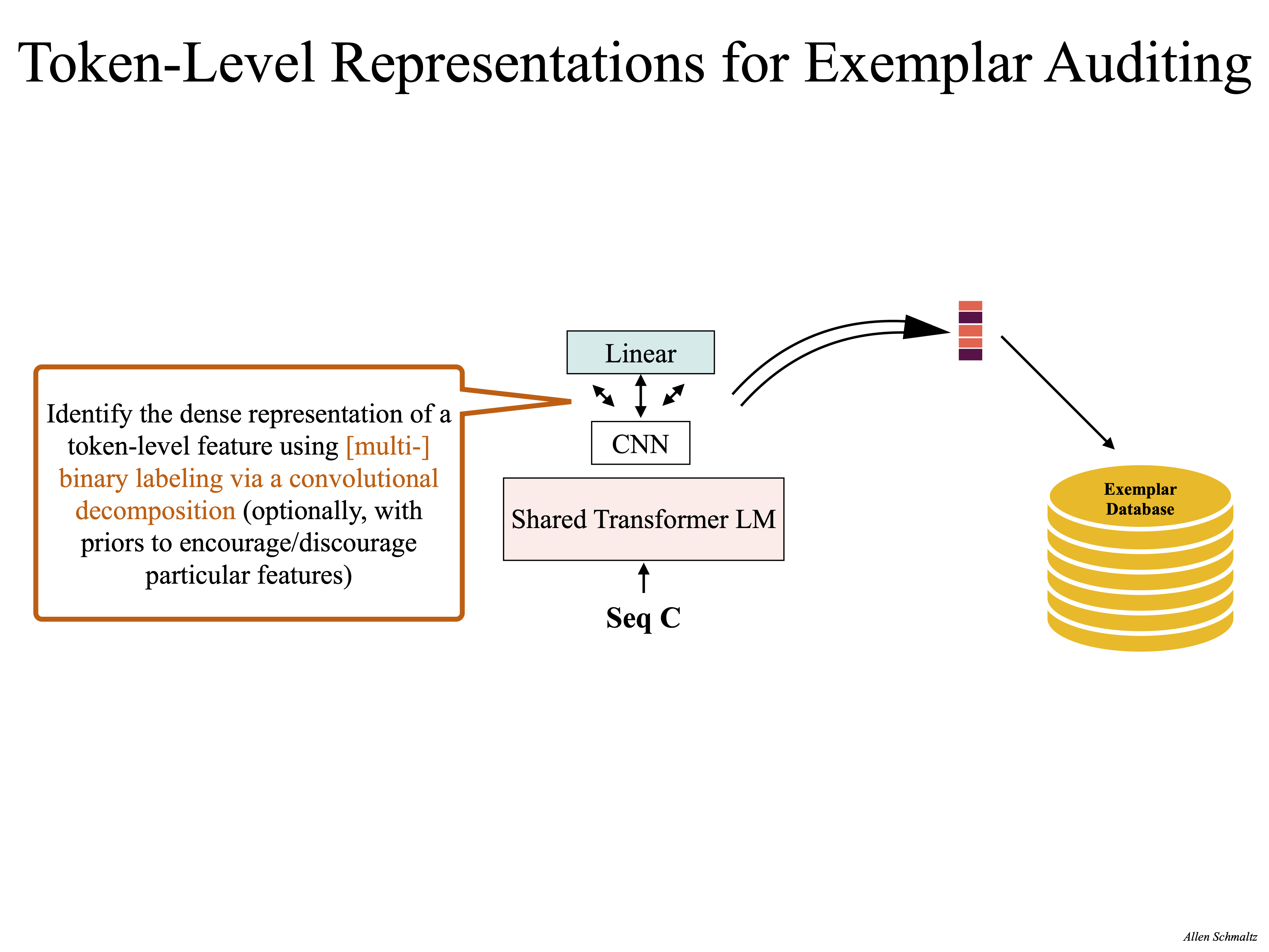

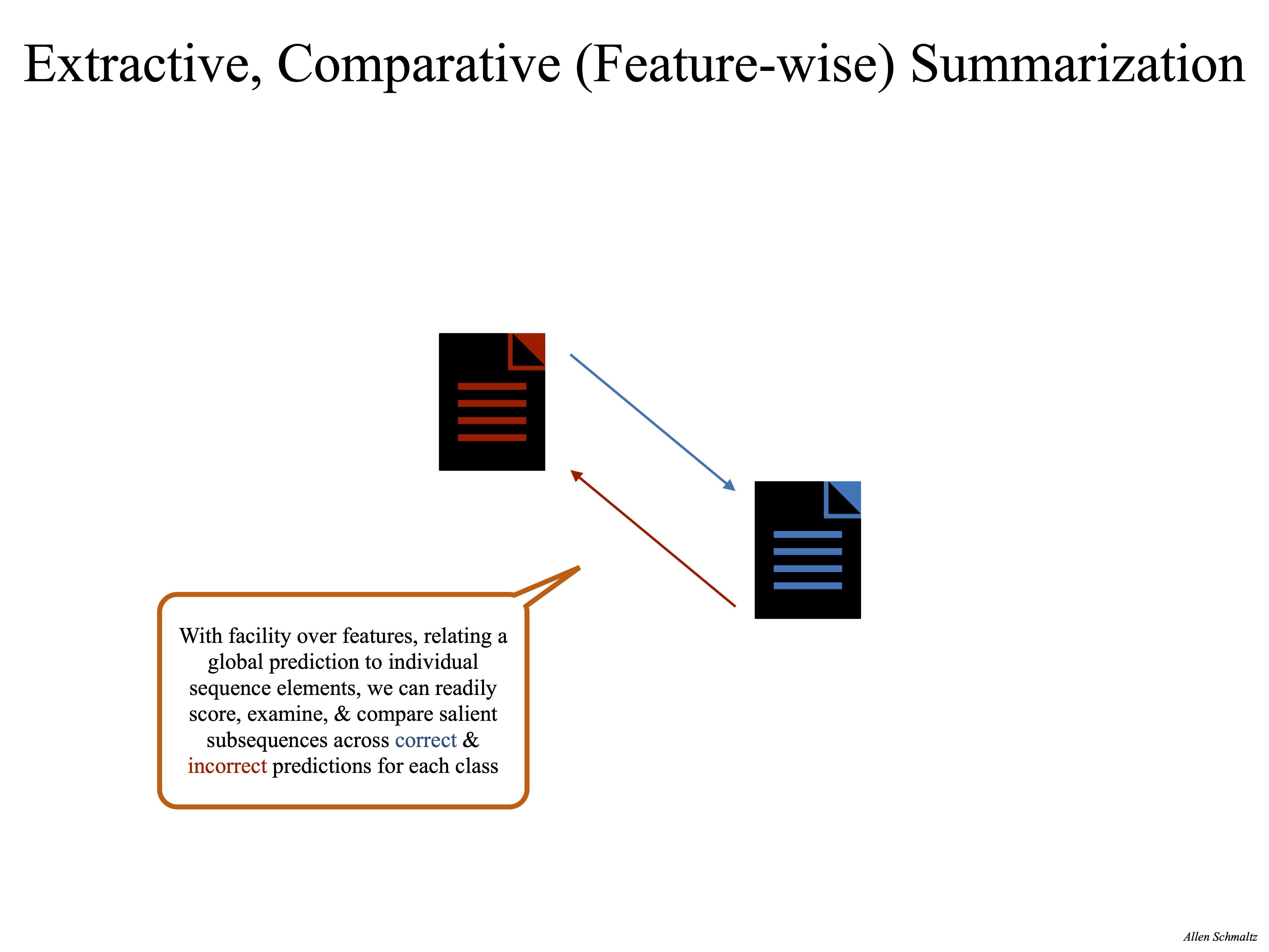

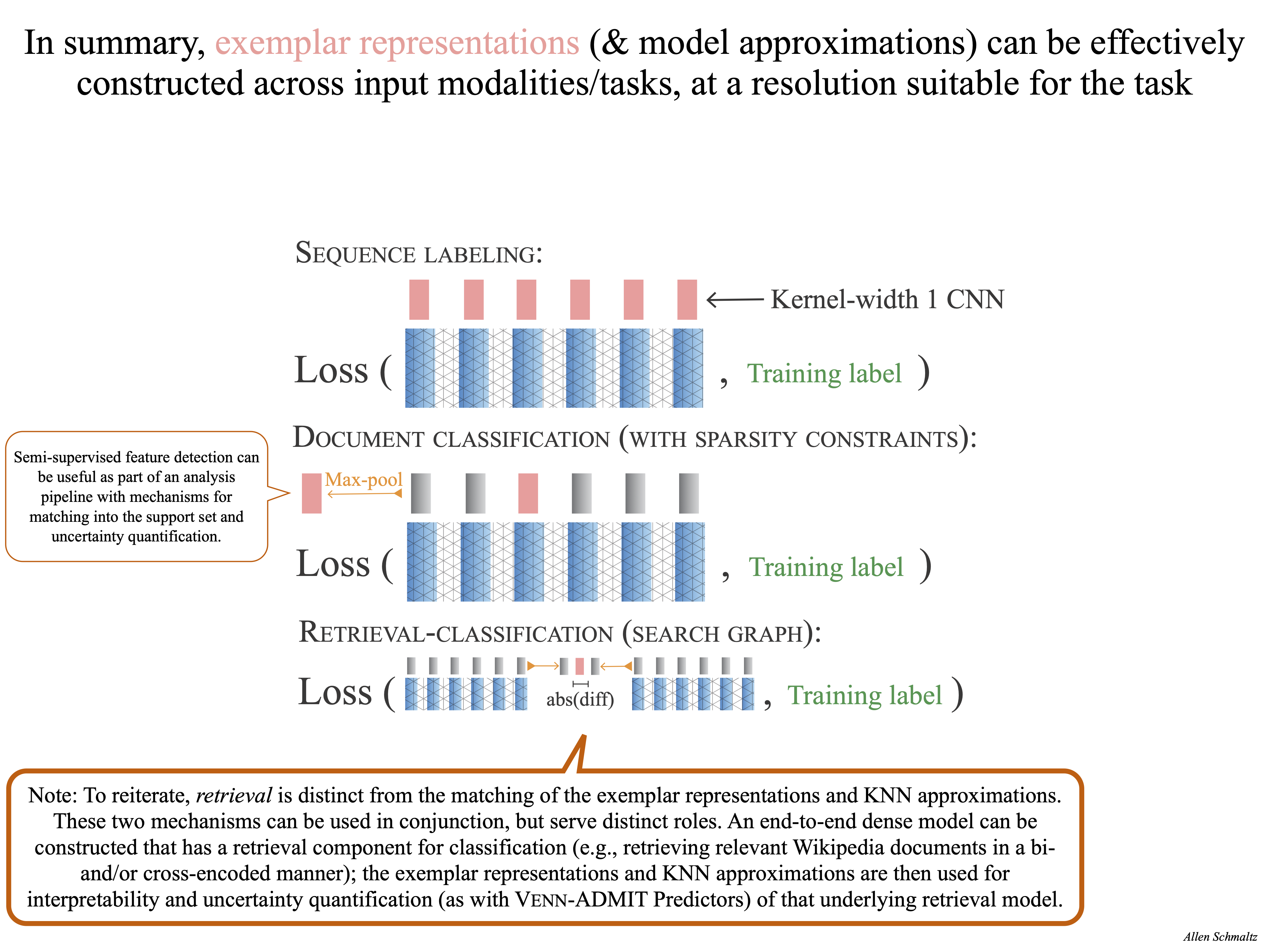

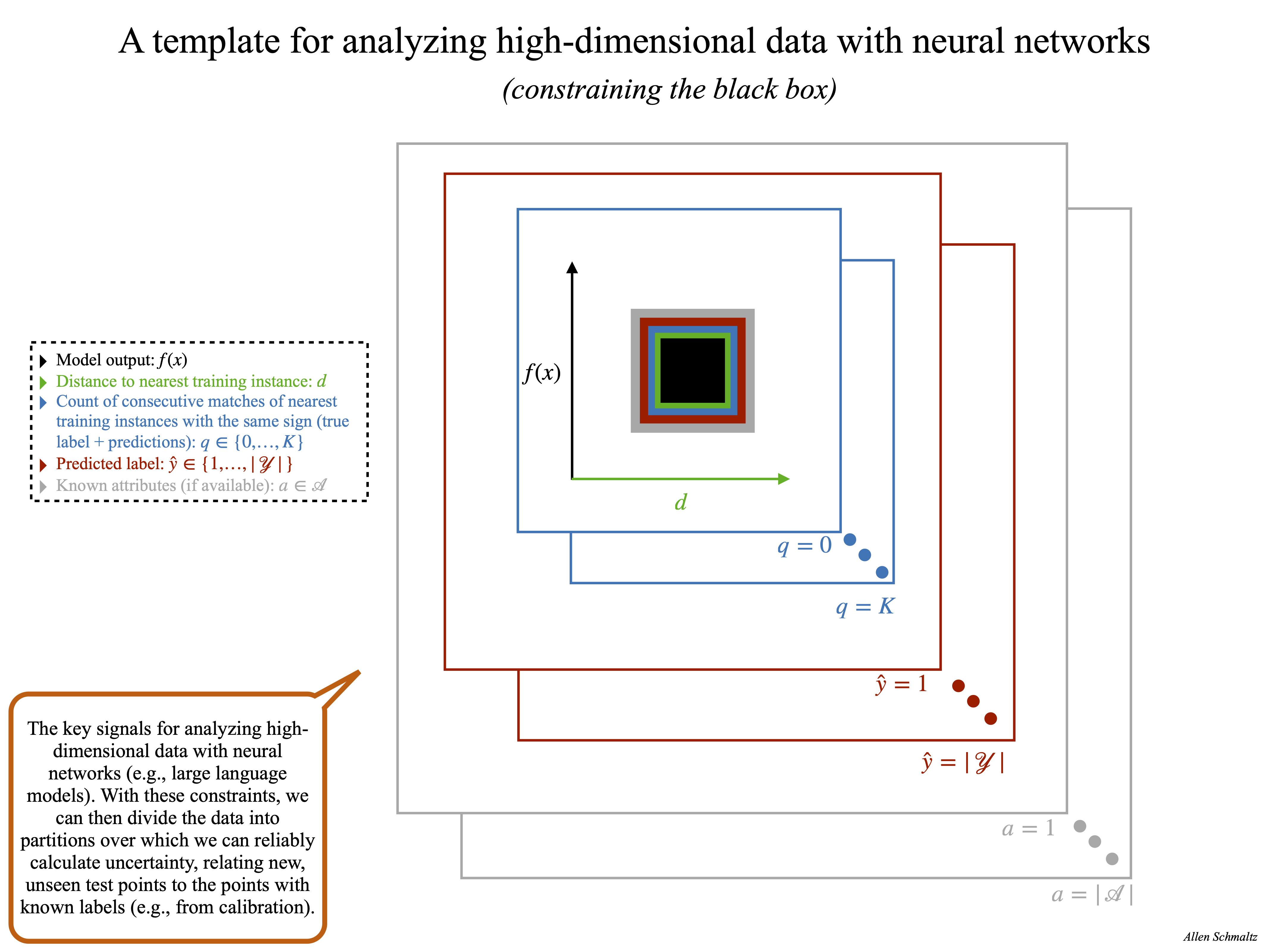

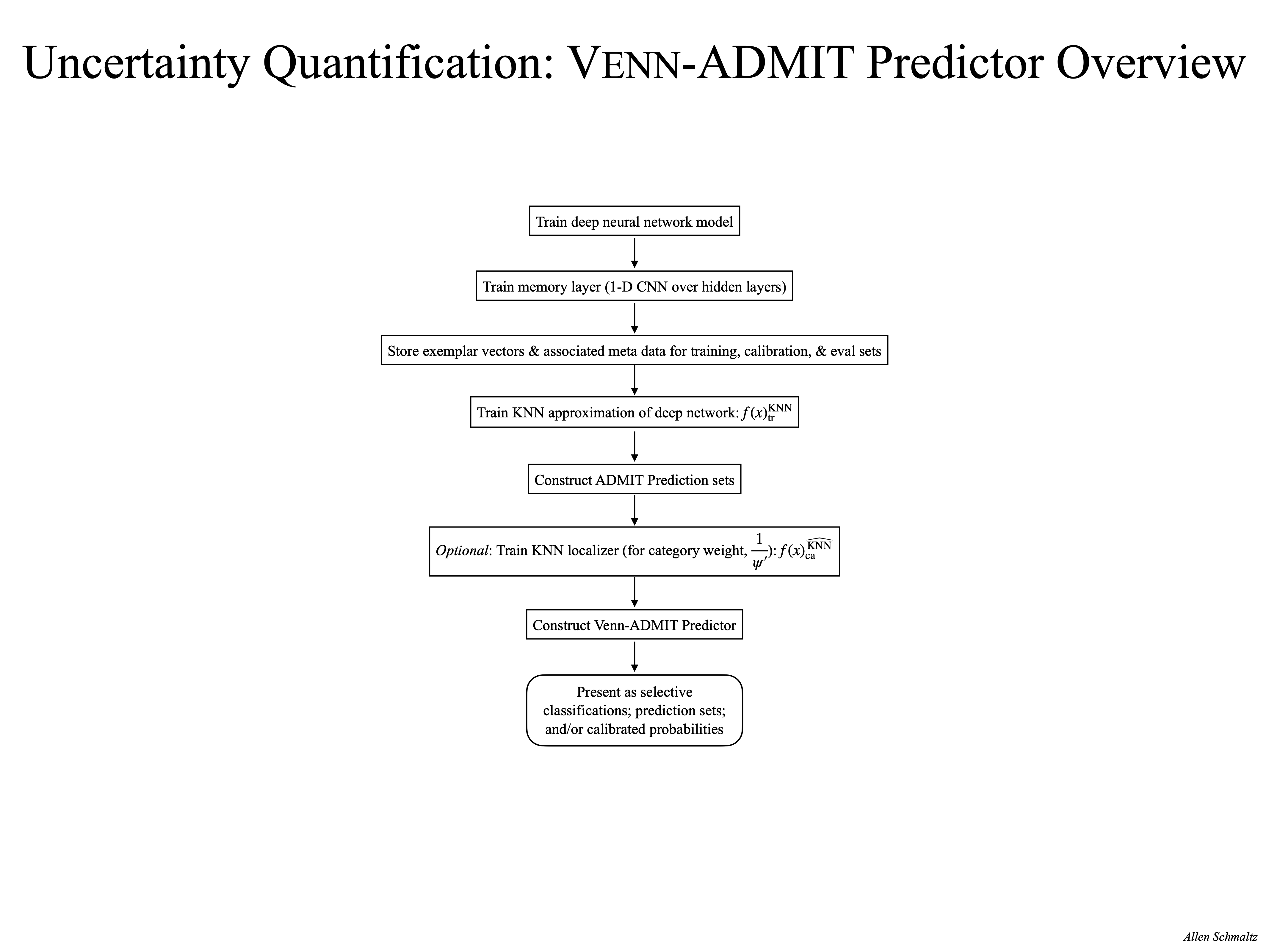

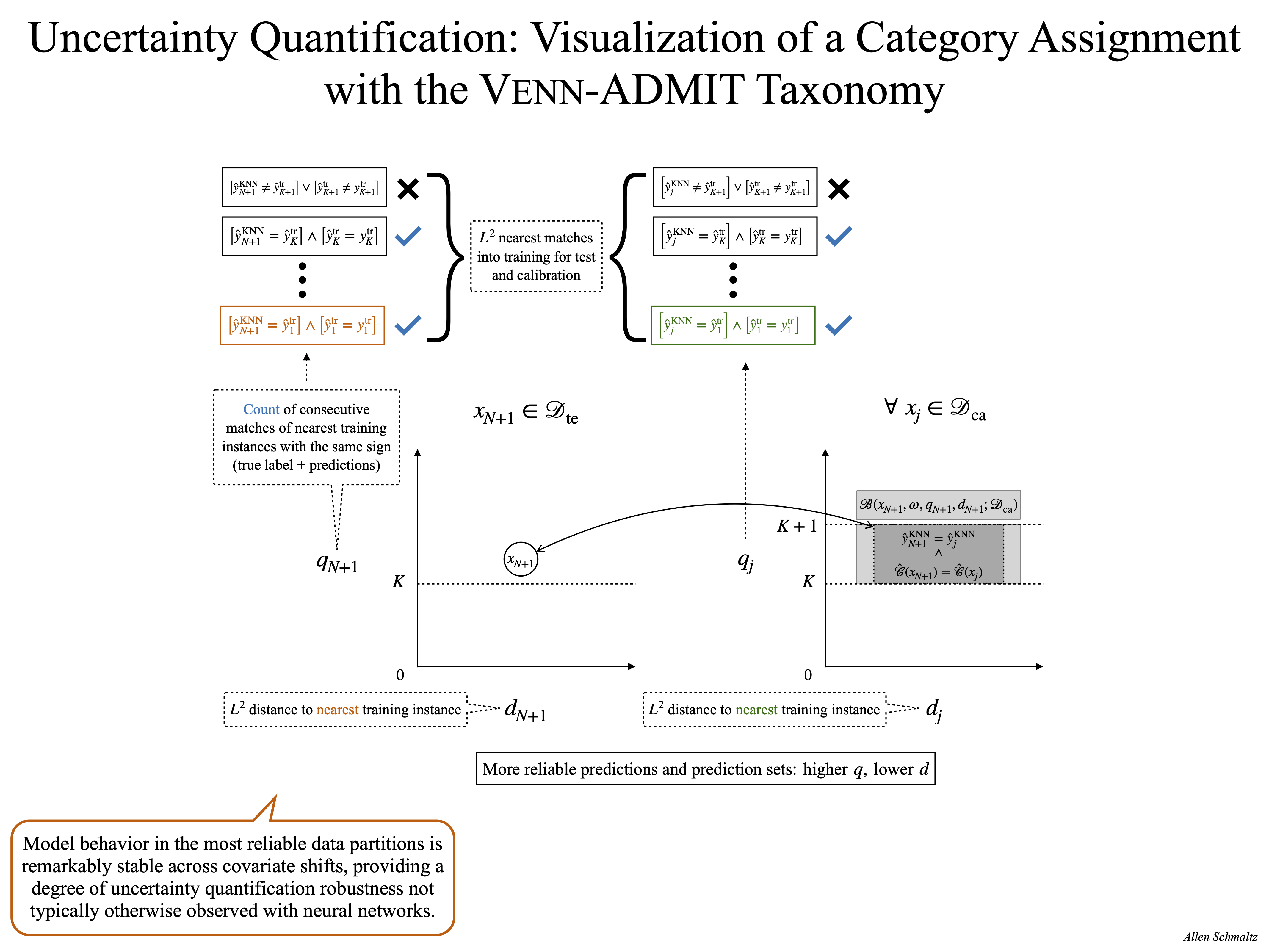

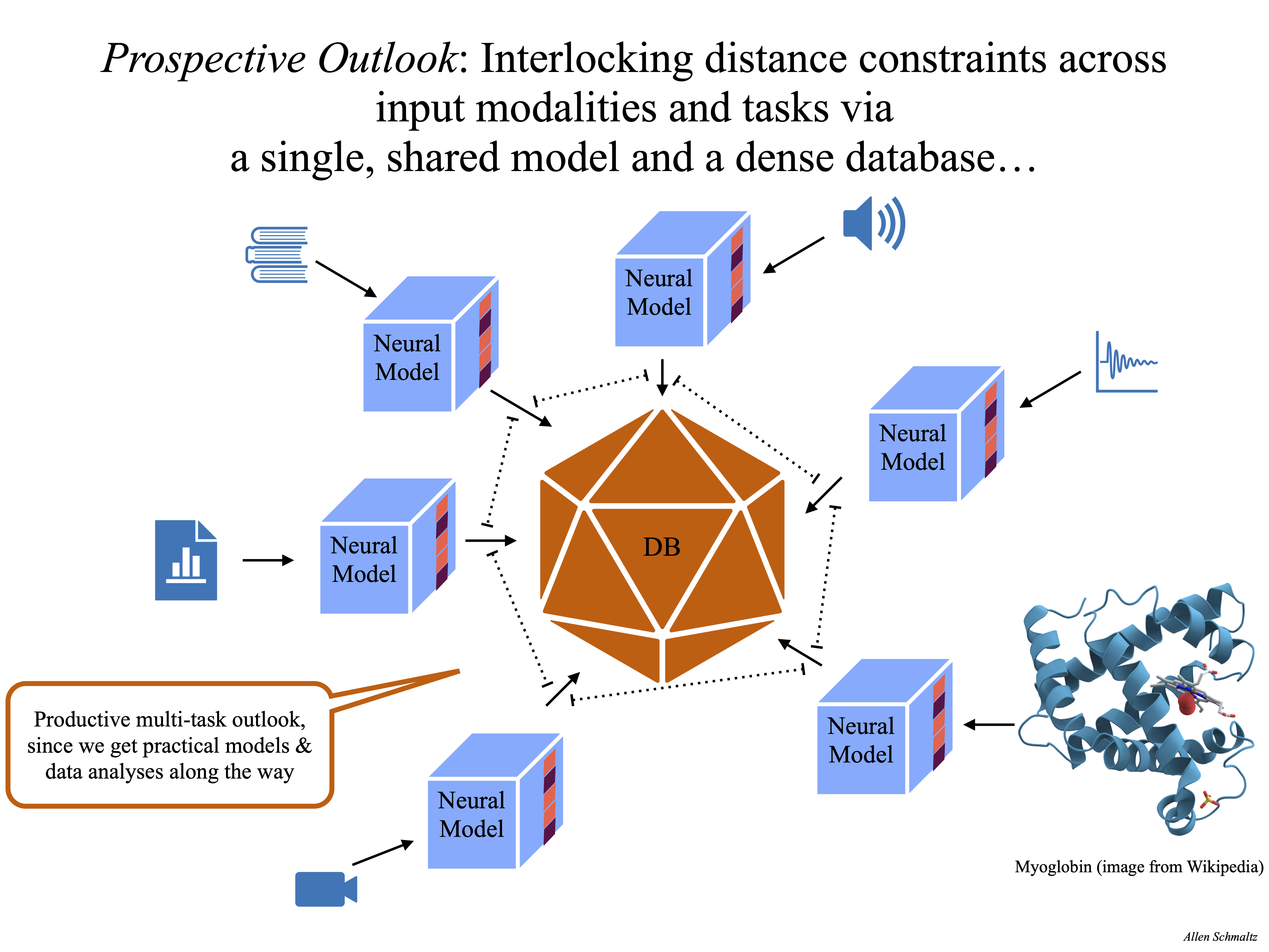

The methods I have developed add new properties to large, otherwise black-box, deep neural networks. These properties are critical for establishing trust in a network's predictions and in end-users' ability to interpret and deploy such models: introspection of predictions against instances with known labels; updatability of models without full re-training; and robust uncertainty quantification over predictions. Collaborations with my fantastic colleagues have yielded concrete implementations of these ideas, as detailed in our recent publications.

I have uploaded recent presentation materials that give an overview of my current line of research; the images on this page (also available as a single PDF) summarize the key aspects.

Uncertainty is but a distance to what is known...